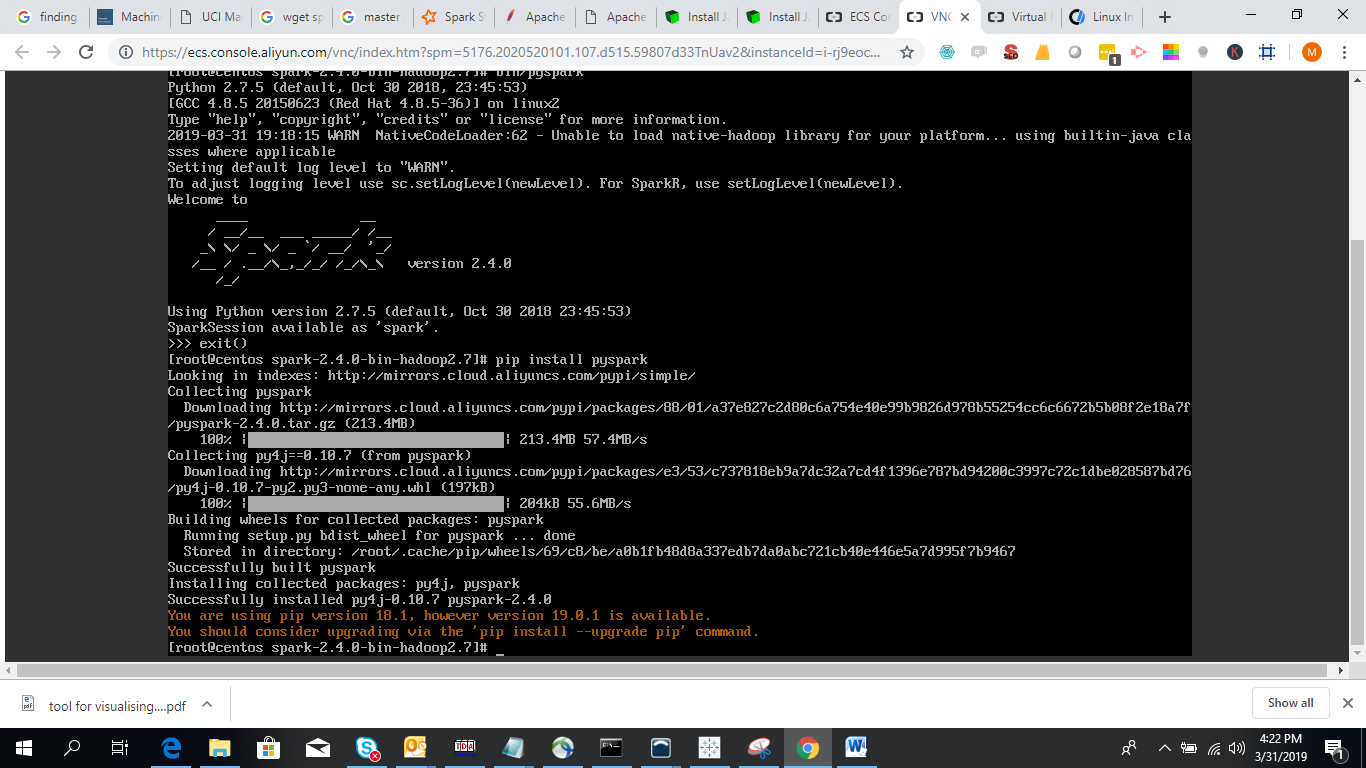

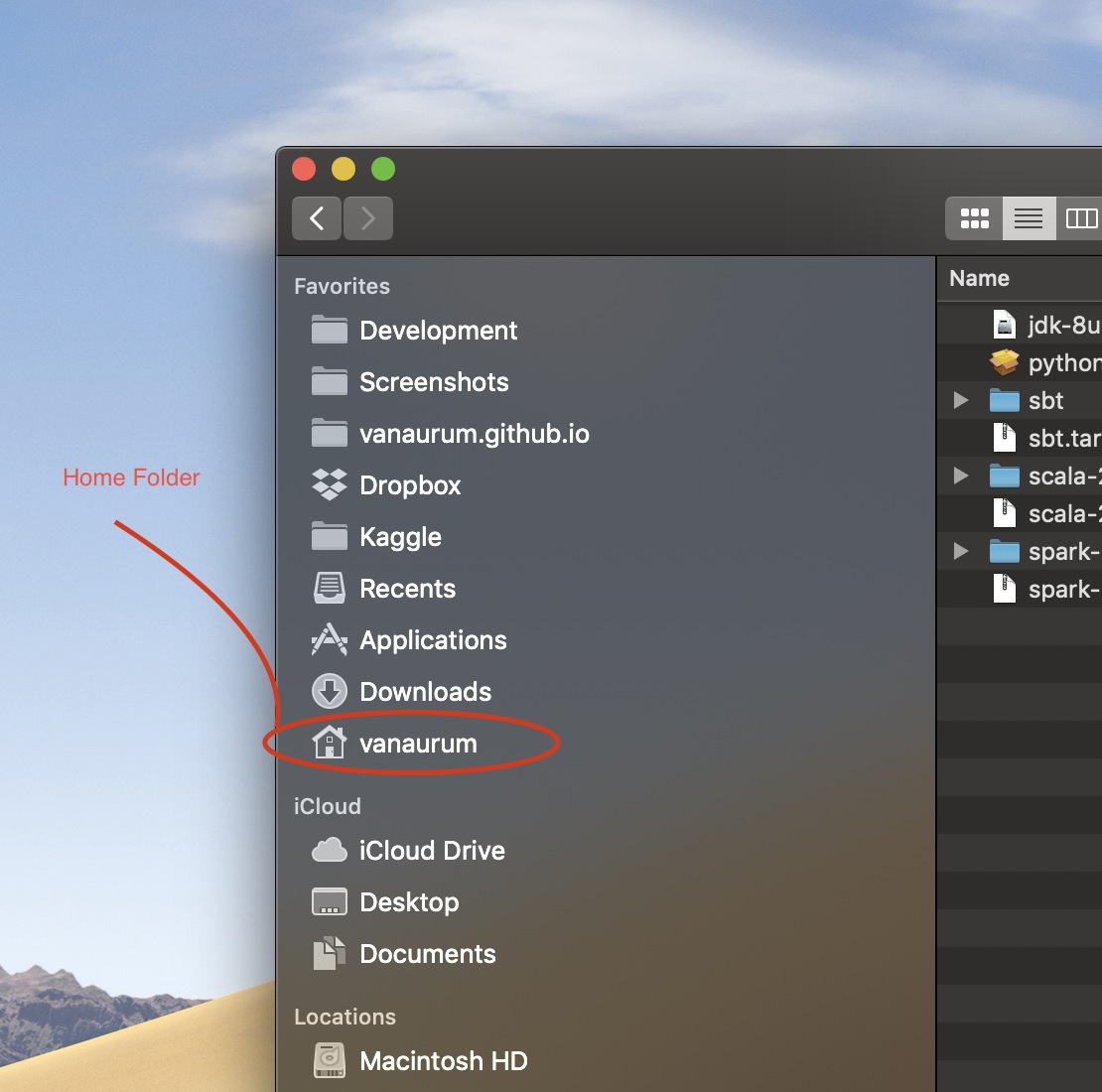

There is no doubt that there are many steps to set up the delta lake environment locally, but it is worth having the possibility to explore this amazing piece of technology on your own machine. Note: As previously mentioned, values in the table above might differ due to the 'randomness' in the data generation.įinally, we have reached the end. Here, we delve into how to seamlessly integrate Spark and Jupyter Notebook on your MAC, turning it into an efficient working environment. Run read_table.py in order to see how many people there are in every state: python read_table.py Download file read_table.py from GitHub:Ħ. json file(s) in _delta_log folder, which contain a transaction log, and set of. For someone who is new to Spark land and has no idea of how to get started on installation, I have composed a sequence of steps that should align you in the right direction. peopleĭelta table consists of two parts. For Python users, PySpark also provides pip installation from PyPI. Note: Specific records will look differently to ones above due to the 'randomness' in the dataset creation.ĭisplay the content of the people folder: tree. Installation ¶ PySpark is included in the official releases of Spark available in the Apache Spark website. The following table should be displayed as a result: Run create_table.py python create_table.py Download file create_table.py from GitHub:ģ. Install mimesis which is used to generate fake records: pip install mimesisĢ. In order to check whether Delta Lake with PySpark work as desired, create a dataset with fake records of 1 million people and save it as a Delta table. Then, import the data from the newly created table and calculate the number of people in each state, as per the instructions below:ġ. Install Delta Spark: pip install delta-spark=2.2.0 Install PySpark: pip install pyspark=3.3.1ħ. My job will have me working majorly in apache Spark (py spark) and data etl. 
I am looking to get a MacBook Pro with m1/m1 Pro chip for position as a data engineer. Create a virtual environment: conda create -name delta_env python=3.10 -yĪctivate delta_env environment: conda activate delta_envĦ. I have looking for this post and most I found are around a year old, so wanted to ask here. Install Apache Spark: brew install apache-sparkĥ. Install Java: brew install the JDK with the system Java wrappers: sudo ln -sfn /Library/Java/JavaVirtualMachines/Ĥ.

Install Python (Miniforge): brew install -cask miniforgeĪfter the installation run the following to setup the shell: conda init "$(basename "$")"ģ. Install Homebrew: /bin/bash -c "$(curl -fsSL )"Ģ. The steps to set up Delta Lake with PySpark on your machine (tested on macOS Ventura 13.2.1) are as follows:ġ. It brings business intelligence (BI) and machine learning (ML) workloads under one roof.Įven though #Databricks offers a great experience on #Azure, #AWS and #GCP, some of you might have a desire to hack locally and explore PySpark and Delta Lake purely as open source projects. Im taking a machine learning course and am trying to install pyspark to complete some of the class assignments. Today, data warehousing no longer relies only on proprietary software and data lakes are not perceived as data landfills. There is no doubt that, when #Databricks announced the open sourcing of the Delta Lake project in 2019, the industry changed forever.
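Spark needs a JDK it can find, and the Spark 3.3 documentation lists Java 8, 11 and 17 as supported. A quick check of the Java installation step can be sketched as below. It runs `java -version` (which writes to stderr) and parses the major version from both the legacy `1.8.0_…` numbering and the modern `17.0.6` style. The helper names are hypothetical; if no JDK is found, the parser simply returns `None`.

```python
import re
import subprocess
from typing import Optional

# Java versions listed as supported in the Spark 3.3 documentation.
SUPPORTED_JAVA = {8, 11, 17}


def parse_java_major(version_output: str) -> Optional[int]:
    """Extract the major Java version from `java -version` output.

    Handles the legacy '1.8.0_361' scheme (Java 8) and the modern '17.0.6' scheme.
    """
    match = re.search(r'version "(\d+)(?:\.(\d+))?', version_output)
    if match is None:
        return None
    first = int(match.group(1))
    if first == 1 and match.group(2) is not None:
        return int(match.group(2))  # '1.8' means Java 8
    return first


def installed_java_major() -> Optional[int]:
    """Run `java -version` and parse the major version, or return None if unavailable."""
    try:
        result = subprocess.run(["java", "-version"], capture_output=True, text=True, check=False)
    except OSError:
        return None  # no `java` executable on PATH
    # The version banner goes to stderr; stdout is included just in case.
    return parse_java_major(result.stderr + result.stdout)


def java_is_supported() -> bool:
    """True if the detected Java major version is one Spark 3.3 supports."""
    return installed_java_major() in SUPPORTED_JAVA


if __name__ == "__main__":
    print("Java major version:", installed_java_major())
    print("Supported by Spark 3.3:", java_is_supported())
```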
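The PySpark and Delta Spark installation steps pin versions that have to match: each delta-spark release is built against one Spark minor version (Delta Lake 2.2.x targets Spark 3.3.x). The sketch below checks the active environment with the standard library's `importlib.metadata`. The `DELTA_TO_SPARK` table is an assumed excerpt of the Delta Lake compatibility table, and the function names are hypothetical.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional

# Assumed excerpt of the Delta Lake compatibility table:
# delta-spark minor release -> Spark minor release it was built for.
DELTA_TO_SPARK = {"2.0": "3.2", "2.1": "3.3", "2.2": "3.3", "2.3": "3.3", "2.4": "3.4"}


def minor(ver: str) -> str:
    """Return the 'major.minor' part of a version string, e.g. '3.3.1' -> '3.3'."""
    return ".".join(ver.split(".")[:2])


def is_compatible(pyspark_version: str, delta_version: str) -> bool:
    """True if the delta-spark release is listed as built for this PySpark minor version."""
    return DELTA_TO_SPARK.get(minor(delta_version)) == minor(pyspark_version)


def installed(dist: str) -> Optional[str]:
    """Installed version of a distribution, or None if it is not installed."""
    try:
        return version(dist)
    except PackageNotFoundError:
        return None


def check_delta_pyspark() -> str:
    """Report whether the pyspark / delta-spark pair in this environment looks consistent."""
    pyspark_v, delta_v = installed("pyspark"), installed("delta-spark")
    if pyspark_v is None or delta_v is None:
        return f"missing package(s): pyspark={pyspark_v}, delta-spark={delta_v}"
    status = "OK" if is_compatible(pyspark_v, delta_v) else "MISMATCH"
    return f"{status}: pyspark {pyspark_v}, delta-spark {delta_v}"


if __name__ == "__main__":
    print(check_delta_pyspark())
```

Running it inside the activated `delta_env` environment should report `OK` for the pyspark 3.3.1 / delta-spark 2.2.0 pair used in this guide.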
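The verification idea (generate fake people, then count them per state) can be illustrated without Spark. The sketch below is a pure-Python analogue, not the create_table.py script from GitHub. It swaps mimesis for the standard library's `random` module and uses a hypothetical five-state list and placeholder names. `collections.Counter` computes what a Spark `groupBy` on a state column would. Seeding the generator, which the original does not describe, makes reruns reproducible. Without a seed you would get the run-to-run differences mentioned in the notes.

```python
import random
from collections import Counter
from typing import List, Tuple

# Hypothetical subset of states; mimesis would generate realistic values instead.
STATES = ["California", "Texas", "Florida", "New York", "Ohio"]


def fake_people(n: int, seed: int = 42) -> List[Tuple[str, str]]:
    """Generate n (name, state) tuples using the standard library's random module."""
    rng = random.Random(seed)  # seeded so reruns produce the same dataset
    return [(f"person_{i}", rng.choice(STATES)) for i in range(n)]


def state_counts(rows: List[Tuple[str, str]]) -> Counter:
    """Count people per state: the pure-Python counterpart of groupBy('state').count()."""
    return Counter(state for _, state in rows)


if __name__ == "__main__":
    counts = state_counts(fake_people(1_000_000))
    for state, count in counts.most_common():
        print(f"{state:<12} {count}")
```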
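The contents of create_table.py and read_table.py are not reproduced here. What follows is a minimal sketch of how a script could write and read a Delta table with PySpark, based on the session setup shown in the Delta Lake quickstart (`configure_spark_with_delta_pip` plus the Delta SQL extension and catalog settings). The two-column `name`/`state` schema, the `people` path and the function names are assumptions. PySpark and delta-spark are imported lazily, inside the function, so the module loads even where they are absent.

```python
from typing import Dict, List, Tuple

# Session settings from the Delta Lake quickstart: register Delta's SQL extension
# and make the default catalog Delta-aware.
DELTA_CONFIGS: Dict[str, str] = {
    "spark.sql.extensions": "io.delta.sql.DeltaSparkSessionExtension",
    "spark.sql.catalog.spark_catalog": "org.apache.spark.sql.delta.catalog.DeltaCatalog",
}


def build_delta_session(app_name: str = "delta_local"):
    """Start a local SparkSession with Delta Lake enabled (needs pyspark and delta-spark)."""
    # Imported lazily so this module can be loaded without PySpark installed.
    from delta import configure_spark_with_delta_pip
    from pyspark.sql import SparkSession

    builder = SparkSession.builder.appName(app_name).master("local[*]")
    for key, value in DELTA_CONFIGS.items():
        builder = builder.config(key, value)
    # Adds the Delta Lake jar matching the installed delta-spark to spark.jars.packages.
    return configure_spark_with_delta_pip(builder).getOrCreate()


def write_people(spark, rows: List[Tuple[str, str]], table_path: str = "people") -> None:
    """Save (name, state) rows as a Delta table; the schema is a hypothetical example."""
    df = spark.createDataFrame(rows, schema=["name", "state"])
    df.write.format("delta").mode("overwrite").save(table_path)


def count_people_by_state(spark, table_path: str = "people"):
    """Read the Delta table back and count rows per 'state', largest group first."""
    df = spark.read.format("delta").load(table_path)
    return df.groupBy("state").count().orderBy("count", ascending=False)


if __name__ == "__main__":
    spark = build_delta_session()
    write_people(spark, [("Alice", "Texas"), ("Bob", "Ohio"), ("Carol", "Texas")])
    count_people_by_state(spark).show()
    spark.stop()
```

Writing with `mode("overwrite")` adds a new commit to `_delta_log` on each run rather than failing because the folder already exists.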
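The two-part layout seen with `tree people` can be made concrete by replaying the transaction log by hand. In the Delta protocol, each commit in `_delta_log` is a zero-padded, version-numbered file of newline-delimited JSON actions. `add` and `remove` actions name the Parquet files that join or leave the table. The sketch below uses only the standard library to compute which Parquet files make up the current version. It ignores checkpoint files, so it is an illustration for a small, freshly written table, not a replacement for the delta-spark reader. The function name `live_data_files` is hypothetical.

```python
import json
from pathlib import Path
from typing import Set


def live_data_files(table_dir: str) -> Set[str]:
    """Replay _delta_log/*.json commits and return the data files in the latest version."""
    log_dir = Path(table_dir) / "_delta_log"
    # Commit files are named by zero-padded version number (00000000000000000000.json, ...),
    # so sorting by name replays them in commit order.
    commits = sorted(p for p in log_dir.glob("*.json") if p.stem.isdigit())
    files: Set[str] = set()
    for commit in commits:
        for line in commit.read_text().splitlines():
            if not line.strip():
                continue
            action = json.loads(line)  # one action per line: commitInfo, metaData, add, remove, ...
            if "add" in action:
                files.add(action["add"]["path"])
            elif "remove" in action:
                files.discard(action["remove"]["path"])
    return files


if __name__ == "__main__":
    for path in sorted(live_data_files("people")):
        print(path)
```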